Architecture

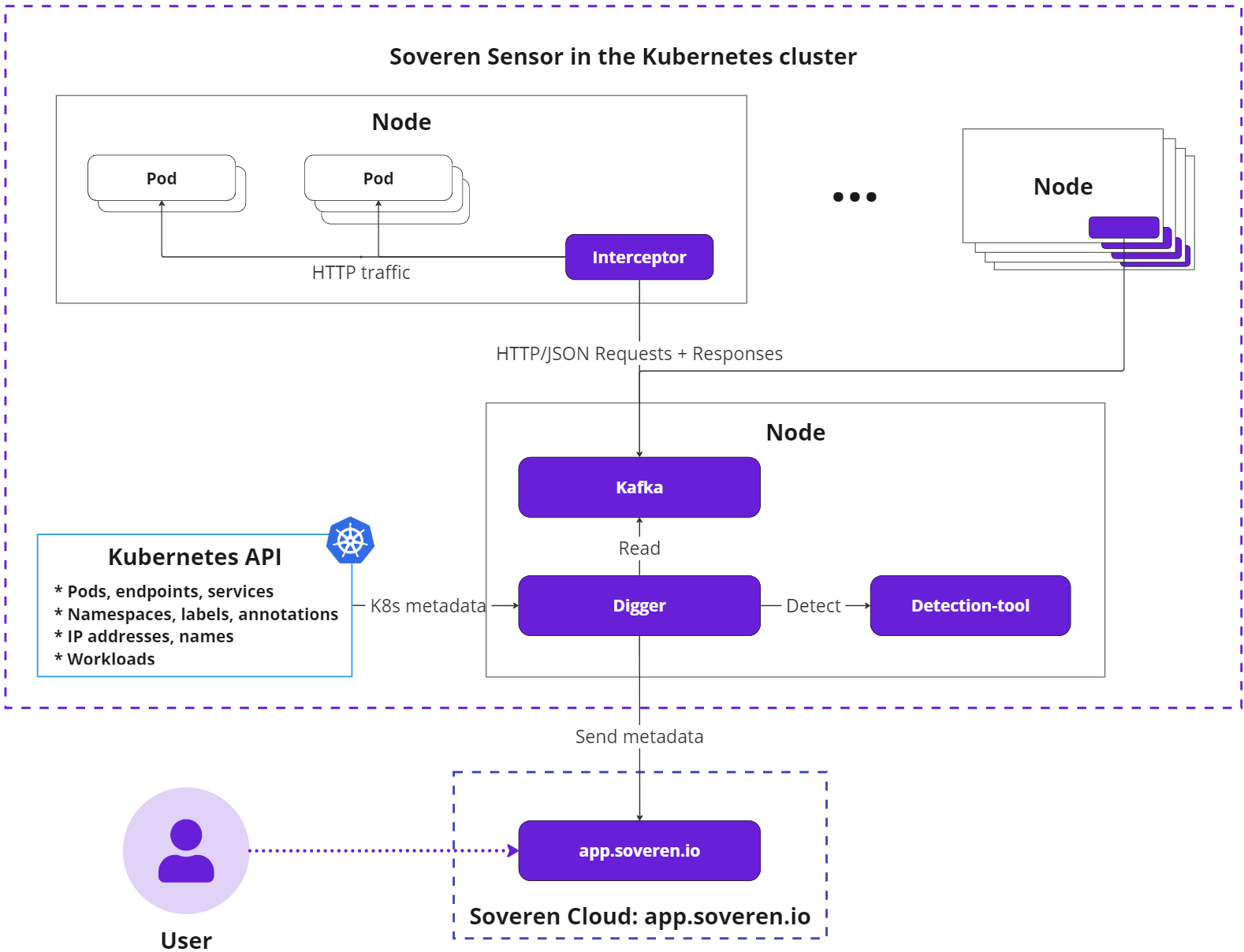

Soveren is composed of two primary components:

- Soveren Sensor: Deployed within your Kubernetes cluster, the Sensor intercepts and analyzes structured HTTP JSON traffic. It collects metadata about data flows, identifying field structures, detected sensitive data types, and involved services. Importantly, the metadata does not include any actual payload values. The collected information is then relayed to the Soveren Cloud.

- Soveren Cloud: Hosted and managed by Soveren, the cloud platform provides dashboards that visualize sensitive data flows and present summary statistics and metrics.
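To illustrate what "metadata without payload values" can mean, here is a minimal sketch that reduces a JSON payload to its field paths and value types. The function name `field_structure` and the path notation are hypothetical, chosen for illustration only; they are not the Sensor's actual output format.

```python
import json


def field_structure(value, path=""):
    """Return (path, type_name) pairs for a parsed JSON value, dropping the values."""
    if isinstance(value, dict):
        out = []
        for key, child in value.items():
            out.extend(field_structure(child, f"{path}.{key}" if path else key))
        return out
    if isinstance(value, list):
        out = []
        for child in value:
            out.extend(field_structure(child, f"{path}[]"))
        # All elements of an array share one path, so duplicates are collapsed.
        return sorted(set(out))
    # Leaf: keep only the type name, never the value itself.
    return [(path, type(value).__name__)]


payload = json.loads('{"user": {"email": "a@example.com", "age": 30}, "tags": ["x", "y"]}')
print(field_structure(payload))
# [('user.email', 'str'), ('user.age', 'int'), ('tags[]', 'str')]
```

The result describes the shape of the payload, while the email address and the other values never appear in it.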

Soveren Sensor

The Soveren Sensor comprises several key parts:

- Interceptors: Distributed across all nodes in the cluster via a DaemonSet, Interceptors capture traffic from pod virtual interfaces using a packet capturing mechanism.

- Processing and messaging system: This system includes a Kafka instance that stores request/response data and a component called Digger, which passes data to the Detector and ultimately sends the resulting metadata to the Soveren Cloud.

- Sensitive data detector (Detector): Employs proprietary machine learning algorithms to identify data types and gauge their sensitivity.

In Kubernetes terms, the Soveren Sensor introduces the following pods to the cluster:

- Interceptors: One per worker node;

- Kafka: Part of the Processing and messaging system, deployed once per setup;

- Digger: Another component of the Processing and messaging system, deployed once per setup;

- Detection-tool (Detector): Deployed once per setup.

We also employ Prometheus Agent for metrics collection; this component is not shown here.
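Because Interceptors run one per worker node while the other components are deployed once, the pod count grows with cluster size. The following minimal sketch makes that arithmetic explicit; the function name and the dictionary keys are hypothetical, and Prometheus Agent is left out of the count.

```python
def expected_sensor_pods(worker_nodes: int) -> dict:
    """Estimate the pods added by the Sensor for a given number of worker nodes."""
    if worker_nodes < 0:
        raise ValueError("worker_nodes must be non-negative")
    return {
        "interceptor": worker_nodes,  # one per worker node (DaemonSet)
        "kafka": 1,                   # deployed once per setup
        "digger": 1,                  # deployed once per setup
        "detection-tool": 1,          # deployed once per setup
    }


pods = expected_sensor_pods(3)
print(sum(pods.values()))  # 6
```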

Let's delve deeper into the main components' operations and communications.

The Soveren Sensor follows this sequence of operations:

- Interceptors collect relevant traffic from pods, focusing on HTTP requests with the `Content-Type: application/json` header.

- Interceptors pair requests to individual endpoints with their respective responses, creating request/response pairs.

- Interceptors transfer these pairs to Kafka using the binary Kafka protocol.

- Digger reads each request/response pair from Kafka and decides whether it needs detailed analysis of data types and their sensitivity (employing intelligent sampling in high-load scenarios). If necessary, Digger forwards the pair to the Detection-tool and retrieves the result.

- Digger assembles a metadata package describing the processed request/response pair and transmits it to the Soveren Cloud using gRPC and protobuf.
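The sequence above can be sketched in a few lines of Python. This is a simplified model under stated assumptions, not the Sensor's implementation: an in-memory `deque` stands in for Kafka, `conn_id` is a hypothetical key used to match a response to its request, `detect` is a caller-supplied stand-in for the Detection-tool, and fixed-rate sampling via `sample_every` is an assumed placeholder for the sampling logic, which the text does not specify.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Message:
    conn_id: str   # hypothetical identifier linking a response to its request
    kind: str      # "request" or "response"
    headers: dict
    body: str


def is_json(msg: Message) -> bool:
    """True if the Content-Type media type is application/json (parameters ignored)."""
    ctype = msg.headers.get("Content-Type", "")
    return ctype.split(";")[0].strip().lower() == "application/json"


def pair_messages(messages):
    """Keep JSON requests and pair each one with the response on the same conn_id."""
    pending, pairs = {}, []
    for msg in messages:
        if msg.kind == "request":
            if is_json(msg):
                pending[msg.conn_id] = msg
        elif msg.conn_id in pending:
            pairs.append((pending.pop(msg.conn_id), msg))
    return pairs


def run_pipeline(messages, detect, sample_every=1):
    """Queue the pairs, sample them, run detection, and build metadata records."""
    queue = deque(pair_messages(messages))  # stand-in for the Kafka topic
    metadata, seen = [], 0
    while queue:
        request, response = queue.popleft()
        seen += 1
        if seen % sample_every != 0:
            continue  # assumption: pairs that are not sampled are skipped entirely
        # The record carries detected types only, never the bodies themselves.
        metadata.append({"conn_id": request.conn_id,
                         "data_types": detect(request, response)})
    return metadata
```

For example, calling `run_pipeline` on one JSON exchange and one HTML exchange yields a single metadata record, because the HTML request is filtered out before pairing.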

The Kubernetes API provides pod names and other metadata to the Digger. Consequently, Soveren Cloud identifies services by their Kubernetes names rather than IP addresses, which makes the data easier to read in the Soveren app.
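As a rough illustration of this name resolution, the sketch below maps a source or destination IP to a readable name using a lookup table. The table `pods_by_ip`, its fields, and the fallback to the raw IP are assumptions for this example; in practice the table would be populated from the Kubernetes API.

```python
def resolve_service(ip: str, pods_by_ip: dict) -> str:
    """Return "namespace/pod-name" for a known pod IP, or the IP itself if unknown."""
    pod = pods_by_ip.get(ip)
    if pod is None:
        return ip  # assumption: fall back to the raw address
    return f'{pod["namespace"]}/{pod["name"]}'


# Hypothetical snapshot of pod metadata keyed by pod IP.
pods_by_ip = {
    "10.0.1.17": {"namespace": "shop", "name": "checkout-7d9f"},
}

print(resolve_service("10.0.1.17", pods_by_ip))  # shop/checkout-7d9f
print(resolve_service("10.0.9.99", pods_by_ip))  # 10.0.9.99
```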

Soveren Cloud

Soveren Cloud is a Software as a Service (SaaS) managed by Soveren. It provides a suite of dashboards displaying diverse views into the metadata collected by the Soveren Sensor. Users can view statistics and analytics on observed data types, their sensitivity, involved services, and any violations of predefined policies and configurations for allowed data types.
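A check for violations of allowed data types can be pictured as a set difference between what was observed and what a policy permits. The sketch below is only an illustration: the data structures, the data type names, and the choice to flag everything for a service without a policy entry are all assumptions, not Soveren's policy model.

```python
def find_violations(observed: dict, allowed: dict) -> dict:
    """Map each service to the sorted data types it handled outside its allowed set."""
    violations = {}
    for service, types in observed.items():
        # Assumption: a service with no policy entry is allowed nothing.
        extra = set(types) - set(allowed.get(service, ()))
        if extra:
            violations[service] = sorted(extra)
    return violations


observed = {
    "shop/checkout": {"email", "card_number"},
    "shop/catalog": {"email"},
}
allowed = {
    "shop/checkout": {"email", "card_number"},
    "shop/catalog": set(),
}

print(find_violations(observed, allowed))  # {'shop/catalog': ['email']}
```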